In a case study using our Zär dataset, we evaluate our tools by reproducing the pitch inventories from the original musicological study and subsequently discuss the potential of computer-assisted approaches for interdisciplinary research. Our tools were developed in close collaboration with ethnomusicologists and allow for incorporating domain knowledge (e.g., on singing styles or musically relevant harmonic intervals) in the different processing steps. As a second contribution, we introduce two computational tools for removing pitch slides and compensating pitch drifts in performances.

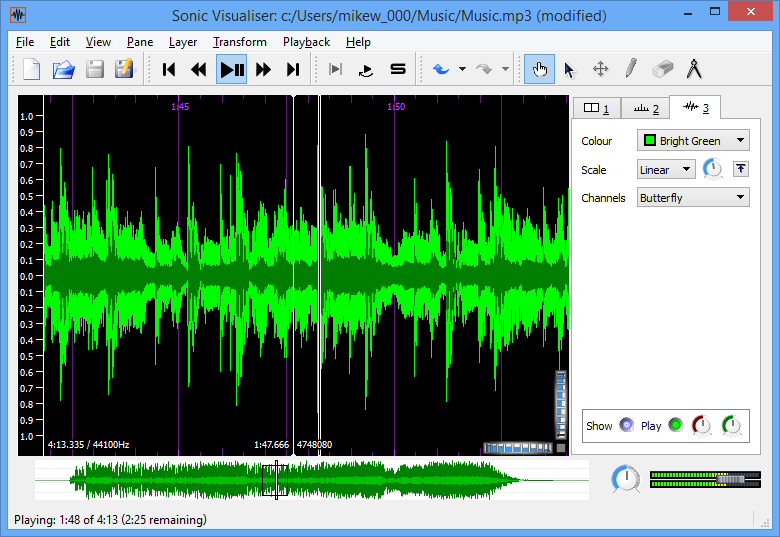

SONIC VISUALISER EXPORT VIDEO LICENSE

As one contribution of this article, we compile a dataset from the previously annotated audio material, which we release under an open-source license for research purposes. In this article, we study how musicological studies on field recordings can benefit from interactive computational tools that support such annotation processes. For instance, in the context of a previous musicological study on pitch inventories (or pitch-class histograms) of Zär performances, ethnomusicologists tediously annotated fundamental frequency (F0) trajectories, stable note events, and pitch drifts for a set of eleven multitrack field recordings.

SONIC VISUALISER EXPORT VIDEO MANUAL

These tasks typically require labor-intensive annotation processes with manual corrections executed by experts with domain knowledge. Musicological studies on tonal analysis or transcription have to account for such musical peculiarities, e.g., by compensating for pitch drifts or identifying stable note events (located between pitch slides). Furthermore, the singers tend to use pitch slides at the beginning and end of sung notes. Throughout a Zär performance, the singers often jointly and intentionally drift upwards in pitch. Three-voiced funeral songs from Svaneti in North-West Georgia (also referred to as Zär) are believed to represent one of Georgia’s oldest preserved forms of collective music-making. Furthermore, considering these two culturally different forms of vocal music as concrete application scenarios, we evaluate our interactive computational tools and demonstrate their potential for corpus-driven research in the field of computational ethnomusicology. As an additional contribution of this thesis, we introduce carefully organized and annotated multitrack research corpora of Western choral music and traditional Georgian vocal music, which are publicly accessible through interactive interfaces. However, such recordings are challenging to produce and thus of limited availability. Development and evaluation of computational tools for analyzing polyphonic singing typically require suitable multitrack recordings with one or several tracks per voice, e.g., obtained from close-up microphones attached to a singer’s head and neck. Furthermore, our tools offer interactive feedback mechanisms that allow domain experts to incorporate musical knowledge.

In particular, we develop musically motivated filtering techniques to detect stable regions in F0-trajectories and compensate for pitch drifts. As a third contribution of this thesis, we present computational tools for handling such peculiarities.

One major challenge for computational analysis of polyphonic singing constitute stylistic elements such as pitch slides and pitch drifts, which can introduce blurring in analysis results. In this way, our approach enables the analysis and exploration of large unlabeled audio collections. As a second contribution, we present an approach to assess the reliability of automatically extracted F0-estimates by fusing the outputs of several F0-estimation algorithms.

Computational analysis of polyphonic vocal music typically requires annotations of the singers’ fundamental frequency (F0) trajectories, which are labor-intensive to generate and may not be available for a particular recording collection. We show that our method can be used to adjust intonation (fine-tuning of pitch) in vocal recordings, e.g., in postproduction contexts. First, we develop an approach for applying time-varying pitch shifts to audio signals based on non-linear time-scale modification (TSM) and resampling techniques. This thesis contributes several computational tools for processing, analyzing, and exploring singing voice recordings using methods from signal processing, computer science, and music information retrieval (MIR). For studying performance aspects and cultural differences, the analysis of recorded audio material has become of increasing importance. Polyphonic vocal music is an integral part of music cultures around the world.
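The idea of discarding pitch slides, keeping stable note regions, and aggregating them into a pitch inventory (pitch-class histogram) can be illustrated with a minimal sketch. It uses plain thresholding and is not the musically motivated filtering developed in this work. The sketch assumes an F0 trajectory given as a NumPy array in Hz at a fixed hop size, with 0 marking unvoiced frames. The function names, the 55 Hz reference frequency, the 5-cent step threshold, the 10-frame minimum duration, and the 10-cent bin width are all hypothetical choices made for illustration. No drift compensation is applied here.

```python
import numpy as np


def hz_to_cents(f0_hz, ref_hz=55.0):
    """Convert F0 values (Hz) to cents relative to ref_hz; unvoiced frames (<= 0 Hz) become NaN."""
    f0 = np.asarray(f0_hz, dtype=float)
    cents = np.full(f0.shape, np.nan)
    voiced = f0 > 0
    cents[voiced] = 1200.0 * np.log2(f0[voiced] / ref_hz)
    return cents


def stable_mask(cents, max_step=5.0, min_len=10):
    """Mark frames that lie in runs of at least min_len frames in which every
    frame-to-frame pitch change stays below max_step cents (a crude slide filter)."""
    cents = np.asarray(cents, dtype=float)
    n = len(cents)
    mask = np.zeros(n, dtype=bool)
    start = 0
    for i in range(1, n + 1):
        # Close the current run [start, i) at the end of the signal, on a large
        # step (e.g., inside a pitch slide), or at an unvoiced (NaN) frame.
        if i == n or not (abs(cents[i] - cents[i - 1]) < max_step):
            if i - start >= min_len and not np.isnan(cents[start]):
                mask[start:i] = True
            start = i
    return mask


def pitch_class_histogram(cents, mask, bin_width=10.0):
    """Fold the stable frames into one octave (0-1200 cents) and count them per bin."""
    cents = np.asarray(cents, dtype=float)
    pitch_classes = np.mod(cents[mask], 1200.0)
    n_bins = int(round(1200.0 / bin_width))
    edges = np.linspace(0.0, 1200.0, n_bins + 1)
    hist, _ = np.histogram(pitch_classes, bins=edges)
    return hist, edges[:-1]  # counts and left bin edges in cents


if __name__ == "__main__":
    # Synthetic trajectory: a held note, a 20-frame upward slide of 400 cents, another held note.
    cents_true = np.concatenate([
        np.full(50, 2405.0),
        np.linspace(2405.0, 2805.0, 22)[1:-1],
        np.full(50, 2805.0),
    ])
    f0 = 55.0 * 2.0 ** (cents_true / 1200.0)
    cents = hz_to_cents(f0)
    mask = stable_mask(cents)
    hist, left_edges = pitch_class_histogram(cents, mask)
    print("stable frames:", int(mask.sum()))
    print("occupied bins (cents):", left_edges[hist > 0])
```

A fixed frame-to-frame threshold is the simplest possible notion of stability. It cannot tell an intentional upward drift from a slow slide. The tools described in the text address that distinction by letting experts incorporate domain knowledge such as singing styles and harmonic intervals.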